Egypt News


Infection and dehydration: Norway's royal palace reveals the latest on King Harald V's health


Official at Nasser Institute: the Health Minister announces the launch of the first surgical robot in Egypt and the Middle East


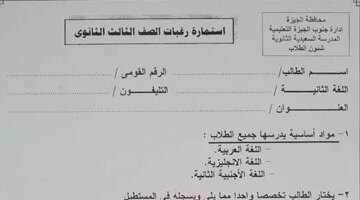

Next Thursday: final deadline for receiving general secondary (Thanaweya Amma) students' forms before applications close


![Gold movements: expectations for gold prices in Egypt](https://www.mansheet.net/wp-content/uploads/2026/02/تحركات-الذهب-توقعات-أسعار-المعدن-الأصفر-في-مصر-خلال-تعاملات-360x200.jpg)

Gold movements: expectations for the yellow metal's prices in Egypt during Wednesday trading

```python
import torch

# NOTE: LinearModel, inputs and labels are not defined in this excerpt;
# they are assumed to come from earlier in the tutorial.

class LinearRegressor:
    def __init__(self):
        # define the linear model with initial values for intercept and coefficient
        self.model = LinearModel(m=0.0, b=0.0)

    def forward(self, input):
        return self.model(input)

    def loss(self, labels, predictions):
        # mean squared error
        return torch.mean((labels - predictions) ** 2)

    def train(self, inputs, labels, epochs=100, learning_rate=0.001):
        for epoch in range(epochs):
            predictions = self.forward(inputs)
            loss_val = self.loss(labels, predictions)

            # Zero existing gradients
            self.model.parameters.m.grad = 0.0
            self.model.parameters.b.grad = 0.0

            # Compute gradients
            loss_val.backward()

            # Optimization step (simulated)
            self.model.parameters.m.value -= learning_rate * self.model.parameters.m.grad
            self.model.parameters.b.value -= learning_rate * self.model.parameters.b.grad

            if epoch % 10 == 0:
                print(f"Epoch {epoch}, Loss: {loss_val.item()}")

# Initialize model and train
linear_regressor = LinearRegressor()
linear_regressor.train(inputs, labels)

print(f"Trained weight: {linear_regressor.model.parameters.m.value}")
print(f"Trained bias: {linear_regressor.model.parameters.b.value}")
```
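For intuition about what `loss_val.backward()` must compute for this model, here is a minimal sketch that fits the same kind of line with plain Python floats and hand-derived mean-squared-error gradients, $\partial L/\partial m = -\frac{2}{N}\sum_i x_i (y_i - \hat{y}_i)$ and $\partial L/\partial b = -\frac{2}{N}\sum_i (y_i - \hat{y}_i)$. The `fit_line` helper, the toy data generated from $y = 2x + 1$, and the learning rate and epoch count are illustrative assumptions, not values from the tutorial.

```python
def fit_line(xs, ys, epochs=2000, learning_rate=0.05):
    """Fit y ~ m*x + b by gradient descent on the mean squared error."""
    m, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        preds = [m * x + b for x in xs]
        errors = [y - p for y, p in zip(ys, preds)]
        # Hand-derived MSE gradients (what backward() would produce)
        grad_m = -2.0 / n * sum(x * e for x, e in zip(xs, errors))
        grad_b = -2.0 / n * sum(errors)
        # Gradients are recomputed from scratch each step, so nothing needs zeroing
        m -= learning_rate * grad_m
        b -= learning_rate * grad_b
    return m, b


# Hypothetical noise-free data generated from y = 2x + 1
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]
m, b = fit_line(xs, ys)
print(f"Trained weight: {m:.3f}, trained bias: {b:.3f}")
```

With an autograd engine, the two `grad_` lines are exactly what `backward()` replaces; the update step stays the same.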

### Challenges and Future Considerations

- **Computation Graph Optimization:** Industrial-scale frameworks such as PyTorch and TensorFlow handle far larger and more complex graphs. For efficiency they rely either on tape-based recording (PyTorch rebuilds its graph dynamically on every forward pass) or on static graphs that can be optimized ahead of time (TensorFlow's graph mode).
- **Control Flow (`if`, `for`, `while`):** Handling dynamic control flow during the forward pass requires more advanced graph-recording mechanisms.
- **Complex Data Types:** Supporting tensors of arbitrary dimensions and functions with multiple inputs and outputs.
- **Higher-Order Derivatives:** Computing the derivative of a derivative.
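The "tape-based" approach mentioned in the first point can be illustrated with a minimal sketch: every operation appends its result and its local derivatives to a flat list (the tape, also called a Wengert list) during the forward pass, and the backward pass walks that list in reverse. The `Tape` class and its `var`/`add`/`mul`/`grad` methods are hypothetical names chosen for this illustration, not the internals of PyTorch or TensorFlow.

```python
class Tape:
    """Hypothetical minimal tape (Wengert list) for scalar reverse-mode autodiff."""

    def __init__(self):
        self.values = []   # forward value of each recorded variable
        self.parents = []  # per variable: list of (input_index, local_derivative)

    def var(self, value, parents=()):
        """Record a variable and return its index on the tape."""
        self.values.append(value)
        self.parents.append(list(parents))
        return len(self.values) - 1

    def add(self, a, b):
        # d(a + b)/da = 1, d(a + b)/db = 1
        return self.var(self.values[a] + self.values[b], [(a, 1.0), (b, 1.0)])

    def mul(self, a, b):
        # d(a * b)/da = b, d(a * b)/db = a
        va, vb = self.values[a], self.values[b]
        return self.var(va * vb, [(a, vb), (b, va)])

    def grad(self, out):
        """Replay the tape backwards and return d(out)/d(v) for every variable v."""
        grads = [0.0] * len(self.values)
        grads[out] = 1.0
        # Recording order is already a topological order, so no sort is needed
        for i in reversed(range(len(self.values))):
            for j, local in self.parents[i]:
                grads[j] += local * grads[i]  # accumulate contributions from every use
        return grads


# f(x, y) = x*y + y*y evaluated at x = 2, y = 3
tape = Tape()
x = tape.var(2.0)
y = tape.var(3.0)
f = tape.add(tape.mul(x, y), tape.mul(y, y))
grads = tape.grad(f)
print(tape.values[f], grads[x], grads[y])  # 15.0 3.0 8.0
```

Because variables are appended in the order they are computed, the tape is already topologically ordered, so no explicit sort is required.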

### Conclusion

Understanding the core of a deep learning framework means understanding autograd. Even a complex-looking process like backpropagation turns out to be a series of chain-rule applications carried out systematically. You could extend this mini-framework to handle multi-dimensional tensors, a library of more complex functions (like `torch.exp` or `torch.sin`), and more sophisticated optimization algorithms.

### Building a Custom Autograd System (Mini-PyTorch)

Creating an automatic differentiation system from scratch is an excellent way to grasp how deep learning frameworks compute gradients. In this guide, we'll build a simple **scalar-based autograd engine** similar to `micrograd` (by Andrej Karpathy), which focuses on the core mechanics without the complexity of multi-dimensional tensors.

### Core Concept: The Computational Graph

Autograd works by building a **Directed Acyclic Graph (DAG)** during the "forward pass."

- **Nodes:** Represent operands (scalar values), including intermediate results.
- **Edges:** Connect each result to the operands it was computed from; each result node also records the operation that produced it.
- **Backpropagation:** We traverse this graph in reverse to apply the **Chain Rule**.
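As a worked example of the chain rule on such a graph, take $f(x, y) = (x \cdot y) + y^2$ at $x = 2$, $y = 3$, and name the intermediate nodes $a = x \cdot y$ and $b = y^2$, so that $f = a + b$. Because $y$ feeds two nodes, its gradient is the sum of the contributions along both paths:

$$
\begin{aligned}
\frac{\partial f}{\partial x} &= \frac{\partial f}{\partial a}\,\frac{\partial a}{\partial x} = 1 \cdot y = 3, \\
\frac{\partial f}{\partial y} &= \frac{\partial f}{\partial a}\,\frac{\partial a}{\partial y} + \frac{\partial f}{\partial b}\,\frac{\partial b}{\partial y} = 1 \cdot x + 1 \cdot 2y = 2 + 6 = 8.
\end{aligned}
$$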

### Step 1: The `Value` Class

This class stores a scalar value, its gradient, and references to the operands and the operation that created it.

```python
import math

class Value:
    def __init__(self, data, _children=(), _op=''):
        self.data = data
        self.grad = 0.0                # d(output)/d(self), filled in by backward()
        self._prev = set(_children)    # Previous nodes in the graph
        self._op = _op                 # The operation that produced this node
        self._backward = lambda: None  # Propagates this node's gradient to its children

    def __repr__(self):
        return f"Value(data={self.data}, grad={self.grad})"

    # Addition: c = a + b
    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data + other.data, (self, other), '+')

        def _backward():
            # Chain rule: dL/da = dL/dc * dc/da. For addition, dc/da = 1.
            self.grad += 1.0 * out.grad
            other.grad += 1.0 * out.grad
        out._backward = _backward
        return out

    # Multiplication: c = a * b
    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data * other.data, (self, other), '*')

        def _backward():
            # Chain rule: dL/da = dL/dc * dc/da. For multiplication, dc/da = b.
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad
        out._backward = _backward
        return out

    # Power: c = a^n (n must be a plain int/float constant)
    def __pow__(self, other):
        assert isinstance(other, (int, float)), "Supporting only int/float powers"
        out = Value(self.data**other, (self,), f'^{other}')

        def _backward():
            # Power rule: d(x^n)/dx = n * x^(n-1)
            self.grad += (other * (self.data**(other - 1))) * out.grad
        out._backward = _backward
        return out

    def relu(self):
        out = Value(0 if self.data < 0 else self.data, (self,), 'ReLU')

        def _backward():
            # The gradient passes through only where the output is positive
            self.grad += (1.0 if out.data > 0 else 0.0) * out.grad
        out._backward = _backward
        return out

    def backward(self):
        """Topological sort to ensure we compute gradients in the right order."""
        topo = []
        visited = set()

        def build_topo(v):
            if v not in visited:
                visited.add(v)
                for child in v._prev:
                    build_topo(child)
                topo.append(v)

        build_topo(self)
        # Initial gradient is 1 (dOut/dOut)
        self.grad = 1.0
        for node in reversed(topo):
            node._backward()

    # Boilerplate for operator overloading
    def __neg__(self):
        return self * -1

    def __sub__(self, other):
        return self + (-other)

    def __radd__(self, other):  # other + self, e.g. 2 + Value(3)
        return self + other

    def __rmul__(self, other):  # other * self, e.g. 2 * Value(3)
        return self * other

    def __truediv__(self, other):
        return self * (other**-1)
```
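The recursive `build_topo` helper is easy to read, but on a long chain of operations it can exceed Python's default recursion limit (1000 frames unless changed via `sys.setrecursionlimit`). A minimal sketch of an iterative alternative follows, assuming only that each node exposes its inputs through a `_prev` set as `Value` does; the `Node` class and the `topo_order` function are hypothetical stand-ins so the block runs on its own.

```python
class Node:
    """Hypothetical stand-in for Value: only the graph structure matters here."""

    def __init__(self, name, children=()):
        self.name = name
        self._prev = set(children)  # inputs of this node, as in Value._prev


def topo_order(root):
    """Return nodes so that every node appears after all of its inputs.

    Uses an explicit stack instead of recursion, so graph depth is not
    limited by Python's recursion limit.
    """
    order, visited = [], set()
    stack = [(root, False)]
    while stack:
        node, inputs_done = stack.pop()
        if inputs_done:
            order.append(node)  # every input has already been emitted
            continue
        if node in visited:
            continue
        visited.add(node)
        stack.append((node, True))  # come back to this node after its inputs
        for child in node._prev:
            if child not in visited:
                stack.append((child, False))
    return order


# The graph of f = (x * y) + y**2: y feeds two intermediate nodes
x, y = Node("x"), Node("y")
a, b = Node("a", (x, y)), Node("b", (y,))
f = Node("f", (a, b))
print([n.name for n in topo_order(f)])  # f comes last; sibling order may vary

# A 5,000-step chain, deeper than the default recursion limit
node = Node("n0")
for i in range(1, 5_000):
    node = Node(f"n{i}", (node,))
print(len(topo_order(node)))
```

Inside `Value.backward()`, the call to `build_topo(self)` could be replaced by `topo = topo_order(self)`; the reversed loop over `topo` stays the same.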

### Step 2: Testing the Engine

Let's see if we can calculate the gradient of a simple function: $f(x, y) = (x \cdot y) + y^2$.

```python
x = Value(2.0)
y = Value(3.0)

# f = (x * y) + y^2
# f = (2 * 3) + 3^2 = 6 + 9 = 15
f = (x * y) + (y ** 2)
f.backward()

print(f"Result: {f.data}")  # Should be 15.0
print(f"df/dx: {x.grad}")   # df/dx = y = 3.0
print(f"df/dy: {y.grad}")   # df/dy = x + 2y = 2 + 6 = 8.0
```
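A common way to sanity-check an autograd engine is to compare its gradients with central finite differences, $\partial f/\partial x_i \approx \frac{f(x + h e_i) - f(x - h e_i)}{2h}$. The sketch below works on a plain Python function so it runs on its own; the `numerical_grad` helper and the step size `h` are illustrative choices, and the values it prints should be close to the 3.0 and 8.0 derived above.

```python
def numerical_grad(f, point, h=1e-6):
    """Estimate the gradient of f at `point` with central differences."""
    grads = []
    for i in range(len(point)):
        plus = list(point)
        minus = list(point)
        plus[i] += h
        minus[i] -= h
        grads.append((f(*plus) - f(*minus)) / (2 * h))
    return grads


def f(x, y):
    return (x * y) + y ** 2


dfdx, dfdy = numerical_grad(f, [2.0, 3.0])
print(f"df/dx ~ {dfdx:.6f}, df/dy ~ {dfdy:.6f}")
```

To check the `Value` class itself, compare these estimates against `x.grad` and `y.grad` after calling `f.backward()`; a large mismatch usually points to a wrong local derivative in one of the `_backward` closures.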

### Step 3: Minimal Neural Network Logic

Now that we have the autograd engine, we can build a single neuron; a layer is simply a list of such neurons applied to the same inputs.

```python
import random

class Neuron:
    def __init__(self, nin):
        # One random weight per input, plus a bias
        self.w = [Value(random.uniform(-1, 1)) for _ in range(nin)]
        self.b = Value(random.uniform(-1, 1))

    def __call__(self, x):
        # w * x + b, followed by a ReLU activation
        act = sum((wi * xi for wi, xi in zip(self.w, x)), self.b)
        return act.relu()

    def parameters(self):
        return self.w + [self.b]

# Example usage
n = Neuron(2)
x = [Value(1.0), Value(-2.0)]
y = n(x)
y.backward()

print(f"Neuron Output: {y.data}")
print(f"Gradient of first weight: {n.w[0].grad}")
```
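Because the weights are random, the printed gradient changes from run to run. The minimal sketch below fixes the weights (the weight values and the `neuron_grads` helper are hypothetical) and computes the same quantities by hand with plain floats: with $z = \sum_i w_i x_i + b$ the neuron outputs $\mathrm{relu}(z)$, so when $z > 0$ we get $\partial y/\partial w_i = x_i$ and $\partial y/\partial b = 1$, and otherwise every gradient is 0, matching the `relu` backward rule.

```python
def neuron_grads(w, b, x):
    """Forward pass and hand-derived gradients of y = relu(w . x + b)."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    active = 1.0 if z > 0 else 0.0       # ReLU derivative (0 when z <= 0)
    y = z if z > 0 else 0.0
    grad_w = [active * xi for xi in x]   # dy/dw_i = x_i when active
    grad_b = active                      # dy/db = 1 when active
    return y, grad_w, grad_b


# Same inputs as the example above, with fixed (hypothetical) weights
y, grad_w, grad_b = neuron_grads([0.5, -0.25], 0.1, [1.0, -2.0])
print(y, grad_w, grad_b)

# Weights that make z negative: the ReLU is inactive and all gradients vanish
print(neuron_grads([-0.5, 0.25], 0.0, [1.0, -2.0]))
```

Setting a `Neuron`'s `w[i].data` and `b.data` to the same fixed values and calling `backward()` should reproduce these gradients.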

### Key Takeaways for Your Implementation

1. **Gradient Accumulation (`+=`):** We use `+=` for `self.grad` because if a variable is used multiple times in a graph, the gradients from each use must be summed (the multivariate chain rule).
2. **Topological Sort:** In the `backward()` method, you cannot propagate a node's gradient until you have processed every node that depends on it. A topological sort guarantees that we move from the output back to the inputs in the correct order.
3. **Operator Overloading:** Overloading `__add__` and `__mul__` lets you write natural Python math (`a + b`) while the `Value` class quietly builds the graph in the background.
4. **Zero Grad:** In a real training loop, you must reset every parameter's `grad` to `0.0` between iterations; otherwise gradients from the previous step keep adding to the new ones.
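A small sketch of the gradient-accumulation and zero-grad points above, assuming a hypothetical `Param` holder with `value` and `grad` fields: in $f(w) = w \cdot w$ the variable $w$ reaches the output through both operands, so the two contributions $w$ and $w$ must be added to give $2w$; and if `grad` is not reset, a second backward pass doubles it.

```python
from dataclasses import dataclass


@dataclass
class Param:
    """Hypothetical parameter holder: a value and its accumulated gradient."""
    value: float
    grad: float = 0.0


def backward_square(p):
    """Backward pass of f = p * p, following the rule used by Value.__mul__."""
    # p is both operands, so each operand's chain-rule term is added separately
    p.grad += p.value * 1.0  # contribution through the left operand
    p.grad += p.value * 1.0  # contribution through the right operand


w = Param(3.0)
backward_square(w)
print(w.grad)       # 6.0 = d(w^2)/dw at w = 3

backward_square(w)  # forgot to zero: the gradients pile up
print(w.grad)       # 12.0, which is wrong for a single step

w.grad = 0.0        # "zero grad" before the next iteration
backward_square(w)
print(w.grad)       # 6.0 again
```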

This architecture is the fundamental "DNA" of PyTorch's `Variable` and `Function` classes (in current PyTorch, `Variable` has been merged into `Tensor`), scaled up to handle N-dimensional tensors and GPU acceleration.

Good news for colon patients: the remarkable benefits of eating yogurt at suhoor, which eliminates inflammation and improves digestion and body functions, according to the former dean of the Heart Institute


Inspection tour: the Prime Minister follows up on the Ring Road development project and the maintenance of the 6th of October Bridge


Reality drama: how did the Palestinian press describe the role of the series "Sahab El Ard" in protecting the truth?


Meteorology warning: cold winds and rain hit several governorates as wintry weather returns and temperatures drop


Sharp drop in temperatures: the Meteorological Authority outlines the weather for the coming days through the last Sunday of 2025


New investment plans: the Prime Minister follows up on the Ministry of Petroleum's projects in Egypt slated for the near future


Official: 7 million food baskets as the Abwab El Kheir initiative launches in Ramadan 2025 | Details in an infographic
